At the end of the day, the goal of your security program should be to chart a path to an optimal set of capabilities. What exactly constitutes "optimal" will vary from org to org. We know this is true because otherwise there would already be a settled "best practice" framework to which everyone would align. That said, there are a lot of common pieces that can be leveraged in identifying the optimal program attributes for your organization.

The Basics

First and foremost, your security program must account for basic security hygiene, which creates the basis for arguing legal defensibility. Which is to say, if you're not doing the basics, then your program can be construed as insufficient, exposing your organization to legal liability (a growing concern). That said, what exactly constitutes "basic security hygiene"?

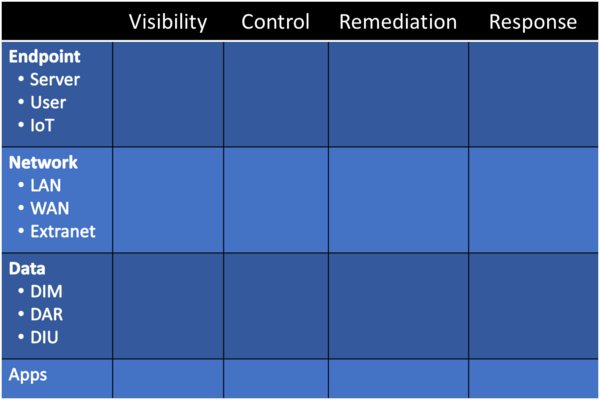

There are a couple of different ways to look at basic security hygiene. For starters, you can look at it by technology grouping:

- Network

- Endpoint

- Data

- Applications

- IAM

- etc.

However, listing out specific technologies can become cumbersome, plus it doesn't necessarily lend itself well to thinking about security architecture and strategy. A few years ago I came up with an approach that looks like this:

More recently, I learned of the OWASP Cyber Defense Matrix (CDM), which takes a similar approach to mine above, but mixes it with the NIST Cybersecurity Framework.

Overall, I like the simplicity of the CDM approach. I think it covers enough bases to project a legally defensible position, while also ensuring a decent starting point that will cross-map to other frameworks and standards depending on the needs of your organization (e.g., maybe you need to move to ISO 27001 or complete a SOC 1/2/3 attestation).
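To make the matrix idea concrete, here is a minimal sketch of using a CDM-style grid to spot gaps in basic hygiene. It assumes the commonly published layout of the matrix (five asset classes crossed with the five NIST CSF 1.x functions). The helper names `build_matrix` and `coverage_gaps` and the sample controls are hypothetical, made up purely for illustration.

```python
from collections import defaultdict

# Rows and columns of a Cyber Defense Matrix style grid.
# Assumption: the five NIST CSF 1.x functions and the five CDM asset classes.
FUNCTIONS = ["Identify", "Protect", "Detect", "Respond", "Recover"]
ASSET_CLASSES = ["Devices", "Applications", "Networks", "Data", "Users"]


def build_matrix(controls):
    """Group (asset_class, function, control_name) tuples into matrix cells."""
    matrix = defaultdict(list)
    for asset, function, name in controls:
        if asset not in ASSET_CLASSES or function not in FUNCTIONS:
            raise ValueError(f"unknown cell: {asset}/{function}")
        matrix[(asset, function)].append(name)
    return matrix


def coverage_gaps(matrix):
    """Return every cell with no control mapped to it, in row-major order."""
    return [
        (asset, function)
        for asset in ASSET_CLASSES
        for function in FUNCTIONS
        if not matrix.get((asset, function))
    ]


# Hypothetical control inventory, for illustration only.
example_controls = [
    ("Devices", "Identify", "asset inventory"),
    ("Devices", "Protect", "endpoint hardening"),
    ("Networks", "Detect", "network monitoring"),
    ("Data", "Recover", "tested backups"),
    ("Users", "Protect", "multi-factor authentication"),
]

matrix = build_matrix(example_controls)
gaps = coverage_gaps(matrix)
total_cells = len(ASSET_CLASSES) * len(FUNCTIONS)
print(f"{len(gaps)} of {total_cells} cells have no mapped control")
```

An empty cell is not automatically a problem. It is a prompt to decide, deliberately, whether that cell belongs in your definition of "the basics."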

Org Culture

One of the oft-overlooked, and yet insanely important, aspects of designing an approach to optimal security for your organization is to understand that it must exist completely within the organization's culture. After all, the organization is composed of people doing work, and pretty much everything you're looking to do will have some degree of impact on those people and their daily lives.

As such, when you think about everything, be it basic security hygiene, information risk management, or even behavioral infosec, you must first consider how it fits with org culture. Specifically, you need to look at the values of the organization (and its leadership), as well as the behaviors that are common, advocated, and rewarded.

If what you're asking people to do goes against the incentive model within which they're operating, then you must either better align with those incentives or find a way to change the incentives such that they encourage preferred behaviors. We'll talk more about behavioral infosec below, so for this section the key takeaway is this: organizational culture creates the incentive model(s) upon which people make decisions, which means you absolutely must optimize for that reality.

For more on my thoughts around org culture, please see my post "Quit Talking About 'Security Culture' - Fix Org Culture!"

Risk Management

Much has been said about risk management over the past decade or more, whether it be PCI DSS advocating for a "risk-based approach" to vulnerability management, updates to the NIST Risk Management Framework, or advocacy from ISO 27005/31000 and proponents of a quantitative approach (such as the FAIR Institute).

The simple fact is that, once you have a reasonable base set of practices in place, almost everything else should be driven by a risk management approach. However, what this means within the context of optimal security can vary substantially, not least because of staffing challenges. If you are a small-to-medium-sized business, then your reality is likely one where you, at best, have a security leader of some sort (CISO, security architect, security manager, whatever) and then maybe up to a couple of security engineers (doers), maybe someone for compliance, and then most likely a lot of outsourcing (MSP/MSSP/MDR, DFIR retainer, auditors, contractors, consultants, etc.).

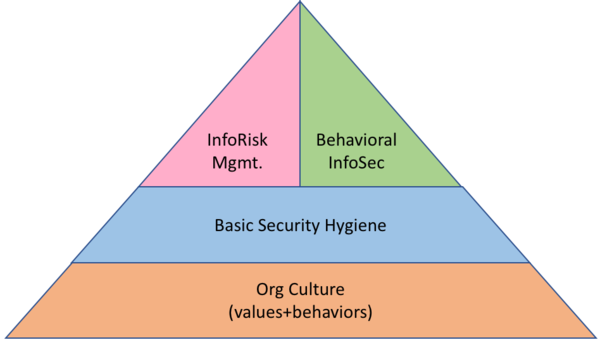

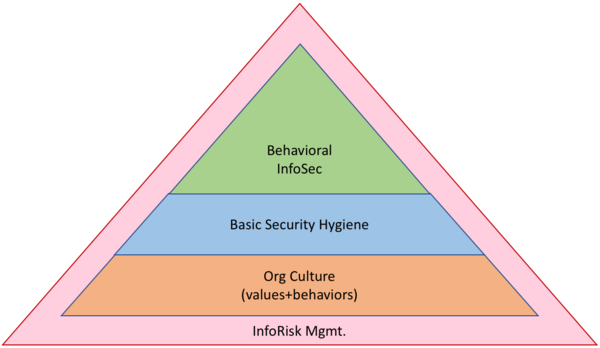

Risk management is not your starting point. As noted above, there are a number of security practices that we know must be done, whether that be securing endpoints, data, networks, access, or what-have-you. Where we start needing risk management is when we get beyond the basics and try to determine what else is needed. As such, the crux of optimal security is having an information risk management capability, which means your overall practice structure might look like this:

However, don't get wrapped around the axle too much on how the picture fits together. Instead, be aware that your basics come first (out of necessity), then comes some form of risk mgmt., which will include gaining a deep understanding of org culture.

Behavioral InfoSec

The other major piece of a comprehensive security program is behavioral infosec, which I have talked about previously in my posts "Introducing Behavioral InfoSec" and "Design For Behavior, Not Awareness." In these posts, and other places, I talk about the imperative to key in on organizational culture, and specifically look at behavior design as part of an overall security program. However, there are a few key differences in this approach that set it apart from traditional security awareness programs.

1) Behavioral InfoSec acknowledges that we are seeking preferred behaviors within the context of organizational culture, which is the set of values and behaviors promoted, supported, and rewarded by the organization.

2) We move away from basic "security awareness" programs like annual CBTs toward practices that seek measurable, lasting change in behavior that provides positive security benefit.

3) We accept that all security behaviors - whether it be hardening or anti-phishing or data security (etc) - must either align with the inherent cultural structure and incentive model, or seek to change those things in order to heighten the motivation to change while simultaneously making it easier to change.

To me, shifting to a behavioral infosec mindset is imperative for achieving success with embedding and institutionalizing desired security practices into your organization. Never is this more apparent than in looking at the Fogg Behavior Model, which explains behavior thusly:

In words, the model says that behavior happens when three things come together: motivation, ability, and a trigger (prompt or cue). We can diagram behavior (as above) with motivation charted on the Y-axis from low to high and ability charted on the X-axis from "hard to do" to "easy to do." A prompt (or trigger) then falls on one side or the other of the "line of action," which means the prompt itself is less important than one's motivation and the ease of the action.
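As a rough illustration of that relationship, here is a minimal sketch. It rests on loud assumptions: motivation and ability are scored from 0.0 to 1.0, and the "line of action" is modeled as a simple product threshold. The Fogg Behavior Model itself does not prescribe a formula or a scale, and the class name, threshold, and sample scores below are all hypothetical.

```python
from dataclasses import dataclass

# Assumption: the "line of action" is approximated as
# motivation * ability == ACTION_THRESHOLD. This is an illustrative stand-in,
# not a formula taken from the model.
ACTION_THRESHOLD = 0.25


@dataclass(frozen=True)
class SecurityAsk:
    name: str
    motivation: float  # 0.0 (low) to 1.0 (high)
    ability: float     # 0.0 (hard to do) to 1.0 (easy to do)
    prompted: bool     # whether a prompt/trigger actually reaches the person


def behavior_occurs(ask: SecurityAsk) -> bool:
    """A prompt only succeeds when motivation and ability clear the action line."""
    if not ask.prompted:
        return False
    return ask.motivation * ask.ability >= ACTION_THRESHOLD


# Hypothetical asks with made-up scores, for illustration only.
asks = [
    SecurityAsk("report phishing with a one-click button", 0.5, 0.9, True),
    SecurityAsk("manually forward full headers to the SOC", 0.5, 0.2, True),
    SecurityAsk("one-click button that nobody was told about", 0.5, 0.9, False),
]

results = {ask.name: behavior_occurs(ask) for ask in asks}
for name, happened in results.items():
    print(f"{name}: {'likely' if happened else 'unlikely'}")
```

The same motivation produces different outcomes depending on how easy the action is, and no amount of prompting rescues an ask that sits below the line.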

We consistently fail in infosec by not properly accounting for incentive models (motivation) or by asking people to do something that is, in fact, too difficult (ability; that is, you're asking for a change that is hard, maybe because it makes it difficult to do their job, or maybe because it's just challenging in general). In all things, when we think about information risk mgmt. and the kinds of changes we want to see in our organizations beyond basic security hygiene, it's imperative that we also understand the cultural impact and how org culture will support, maybe even reward, the desired changes.

Overall, I would argue that my original pyramid diagram ends up being more useful insomuch as it encourages us to think about info risk mgmt. and behavioral infosec in parallel and in conjunction with each other.

Putting It All Together

All of these practice areas - basic security hygiene, info risk mgmt, behavioral infosec - ideally come together in a strategic approach that achieves optimal security. But what does that really mean? What are the attributes, today, of an optimal security program? There are lessons we can learn from agile, DevOps, ITIL, Six Sigma, and various other related programs and research, ranging from Deming to Senge and everything in between. Combined, "optimal security" might look something like this:

Conscious

- Generative (thinking beyond the immediate)

- Mindful (thinking of people and orgs in the whole)

- Discursive (collaborative, communicative, open-minded)

Lean

- Efficient (minimum steps to achieve desired outcome)

- Effective (do we accomplish what we set out to do?)

- Managed (haphazard and ad hoc are the enemy of lasting success)

Quantified

- Measured (applying qualitative or quantitative approaches to test for efficiency and effectiveness)

- Monitored (not just point-in-time, but watched over time)

- Reported (to align with org culture, as well as to help reform org culture over time)

Clear

- Defined (what problem is being solved? what is the desired outcome/impact? why is this important?)

- Mapped (possibly value stream mapping, possibly net flows or data flows, taking time to understand who and what is impacted)

- Reduced (don't bite off too much at once, acknowledge change requires time, simplify simplify simplify)

Systematic

- Systemic understanding (the organization is a complex organism that must work together)

- Automated where possible (don't install people where an automated process will suffice)

- Minimized complexity (perfect is the enemy of good, and optimal security is all about "good enough," so seek the least complex solutions possible)

Obviously, much, much more can be said about the above, but that's fodder for another post (or a book, haha). Instead, I present the above as a starting point for a conversation to help move everyone away from some of our traditional, broken approaches. Now is the time to take a step back and (re-)evaluate our security programs and how best to approach them.